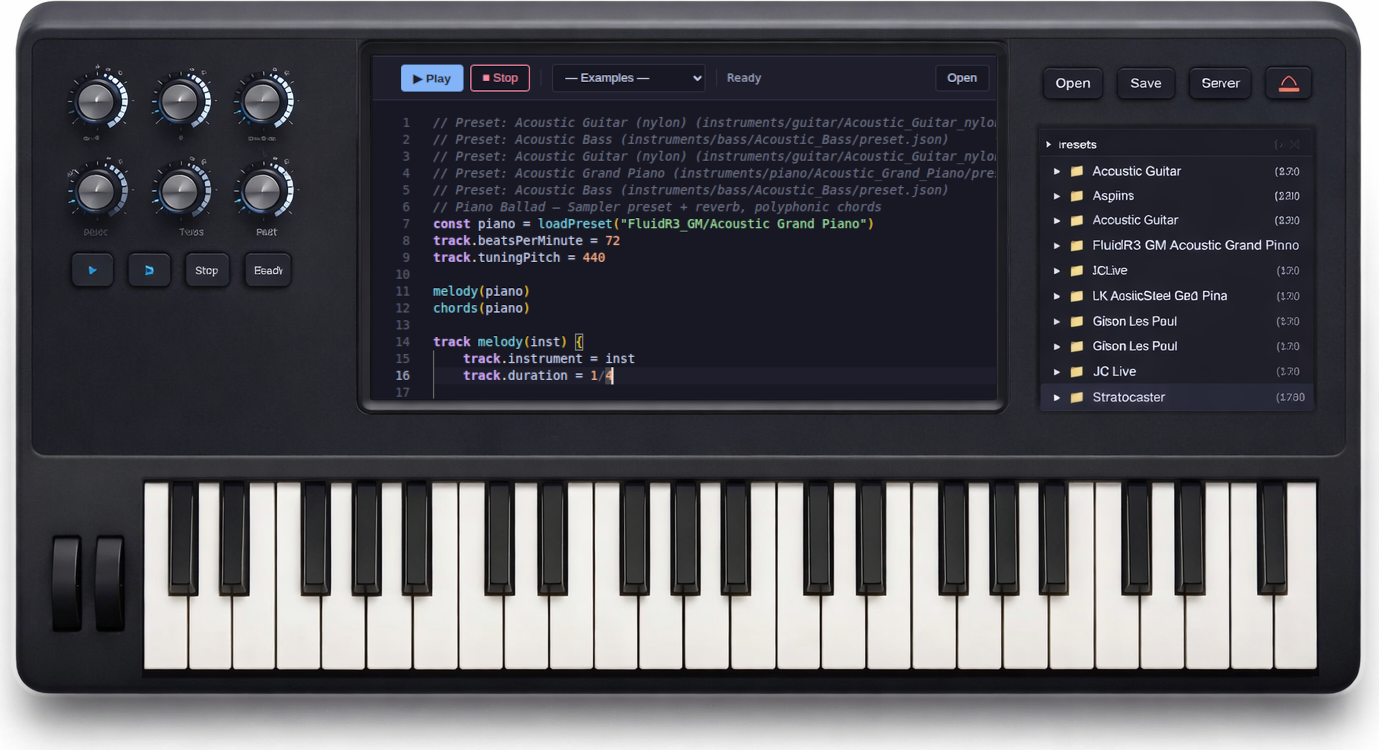

Keyboard Prototype

We're building a performance-grade keyboard instrument that runs the SongWalker synthesis engine, written in Rust, on embedded hardware. The goal is an instrument that boots instantly, never crashes on stage, and delivers deterministic, glitch-free audio.

Why Hardware?

SongWalker already runs as a web editor, a VST3 plugin, and a CLI tool, all powered by the same Rust engine. A hardware keyboard is the natural next step: an instrument that doesn't depend on a laptop, can't be interrupted by OS updates, and boots to sound-ready in under a second.

Instant Boot

Sub-second cold start. Sound-ready before you finish reaching for the volume knob. No OS, no loading screens.

Stage Stable

No OS crashes and no forced updates mid-performance. Embedded firmware with a watchdog timer and a safe-mode fallback.

Deterministic Audio

Hard real-time audio callback driven by DMA. No glitches even under heavy UI load; with the split-brain architecture, the UI can even crash without affecting the sound.
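On microcontrollers like the Daisy's STM32H750, a DMA-driven audio callback usually works on a circular buffer split into two halves: the DMA engine streams one half to the codec while the firmware renders the other, and the half-transfer and transfer-complete interrupts signal which half is free. The sketch below simulates that ping-pong pattern in plain Rust. `PingPong`, `on_half_transfer`, `on_transfer_complete`, and the 128-sample half size are assumptions for illustration, not a HAL or libDaisy API.

```rust
/// Samples per half-buffer (assumed; matches the engine's 128-sample blocks).
const BLOCK: usize = 128;

/// A circular DMA buffer split into two halves. While the DMA engine reads
/// one half out to the codec, the audio callback renders into the other.
struct PingPong {
    buf: [f32; 2 * BLOCK],
}

impl PingPong {
    fn new() -> Self {
        Self { buf: [0.0; 2 * BLOCK] }
    }

    /// Half-transfer interrupt: the DMA has finished reading the first half
    /// and moved on to the second, so the first half is free to refill.
    fn on_half_transfer(&mut self, render: &mut impl FnMut(&mut [f32])) {
        render(&mut self.buf[..BLOCK]);
    }

    /// Transfer-complete interrupt: the DMA has wrapped back to the start,
    /// so the second half is free to refill.
    fn on_transfer_complete(&mut self, render: &mut impl FnMut(&mut [f32])) {
        render(&mut self.buf[BLOCK..]);
    }
}

fn main() {
    let mut dma = PingPong::new();
    let mut blocks_rendered = 0u32;
    // Stand-in for the synth engine: fill each free half with a block counter.
    let mut render = |out: &mut [f32]| {
        blocks_rendered += 1;
        for s in out.iter_mut() {
            *s = blocks_rendered as f32;
        }
    };
    dma.on_half_transfer(&mut render);
    dma.on_transfer_complete(&mut render);
    println!("{} {}", dma.buf[0], dma.buf[BLOCK]); // prints "1 2"
}
```

On real hardware the buffer lives in DMA-accessible memory and the callbacks run in interrupt context, so each render must finish before the DMA comes back around to that half; this simulation ignores timing entirely.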

Same Engine, New Form

The same Rust DSP code that powers the browser editor and VSTi plugin — oscillators, sampler, effects, mixer — compiled to embedded firmware.

Recommended Platform: Daisy + Rust

Our recommended approach is Rust on Electro-Smith Daisy hardware (STM32H750, ARM Cortex-M7 @ 480 MHz). The STM32H750's Cortex-M7 core includes a double-precision FPU, which means the existing f64 DSP code in songwalker-core can run without being rewritten in f32 for the initial port. This dramatically reduces porting effort.
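One way to keep a later f32 pass cheap is to route all DSP math through a single sample-type alias. This is a minimal sketch assuming a hypothetical `Sample` alias and `OnePole` smoother; neither is taken from songwalker-core.

```rust
/// The engine's working sample type (hypothetical alias). f64 runs in
/// hardware on the STM32H750's double-precision FPU; switching this to f32
/// later would halve the memory used by sample buffers.
type Sample = f64;

/// A one-pole lowpass smoother written only in terms of `Sample`,
/// so it compiles unchanged for either precision.
struct OnePole {
    coeff: Sample, // in [0, 1): larger values smooth more heavily
    state: Sample,
}

impl OnePole {
    fn new(coeff: Sample) -> Self {
        Self { coeff, state: 0.0 }
    }

    fn process(&mut self, input: Sample) -> Sample {
        // y[n] = (1 - a) * x[n] + a * y[n - 1]
        self.state = (1.0 - self.coeff) * input + self.coeff * self.state;
        self.state
    }
}

fn main() {
    let mut smoother = OnePole::new(0.5);
    let y1 = smoother.process(1.0);
    let y2 = smoother.process(1.0);
    println!("{y1} {y2}"); // prints "0.5 0.75"
}
```

With this layout, moving to f32 is a one-line change followed by listening tests and profiling, since lower precision can audibly change the behavior of some filters.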

Existing Engine Capabilities

The songwalker-core Rust crate already provides a full synthesis pipeline, and many of its modules are algorithmically portable to embedded targets as-is:

Oscillators

Sine, plus PolyBLEP anti-aliased sawtooth, square, and triangle waveforms, all with detune support.
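For readers unfamiliar with PolyBLEP: the oscillator generates the naive waveform and subtracts a two-sample polynomial correction around each discontinuity, which removes most of the audible aliasing. Below is a minimal sawtooth sketch using the commonly published polynomial. `Saw` and `poly_blep` are illustrative names rather than songwalker-core's API, and detune is omitted.

```rust
/// Two-sample polynomial BLEP residual for a unit step at phase 0 (== 1).
/// `t` is the oscillator phase in [0, 1); `dt` is the phase increment per sample.
fn poly_blep(t: f64, dt: f64) -> f64 {
    if t < dt {
        // Sample just after the discontinuity.
        let x = t / dt;
        2.0 * x - x * x - 1.0
    } else if t > 1.0 - dt {
        // Sample just before the discontinuity.
        let x = (t - 1.0) / dt;
        x * x + 2.0 * x + 1.0
    } else {
        0.0
    }
}

/// Band-limited sawtooth: naive ramp minus the BLEP residual.
struct Saw {
    phase: f64, // in [0, 1)
    dt: f64,    // frequency / sample_rate
}

impl Saw {
    fn new(freq: f64, sample_rate: f64) -> Self {
        Self { phase: 0.0, dt: freq / sample_rate }
    }

    fn next_sample(&mut self) -> f64 {
        let naive = 2.0 * self.phase - 1.0; // ramps from -1 up to +1
        let out = naive - poly_blep(self.phase, self.dt);
        self.phase += self.dt;
        if self.phase >= 1.0 {
            self.phase -= 1.0;
        }
        out
    }
}

fn main() {
    let mut osc = Saw::new(440.0, 48_000.0);
    let block: Vec<f64> = (0..128).map(|_| osc.next_sample()).collect();
    // The correction centers the reset, so the first sample is 0 rather than -1.
    println!("{}", block[0]);
}
```

The sine needs no correction because it has no discontinuities; square and triangle variants follow the same idea applied to their own edges or slope changes.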

Sampler

Multi-zone sample playback with pitch shifting, loop points, linear interpolation, and velocity scaling.
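The playback loop for a single zone can be sketched as a fractional read position advanced by a pitch ratio, with linear interpolation between neighboring samples and a wrap at the loop end. This sketch assumes mono `f32` sample data, a forward sustain loop, and a sample recorded at the output sample rate; `Zone` and `SamplerVoice` are hypothetical names.

```rust
/// One sample zone with a forward sustain loop (indices into `data`).
struct Zone {
    data: Vec<f32>,
    loop_start: usize,
    loop_end: usize, // exclusive; loop_start < loop_end <= data.len()
}

/// A playing voice reading through a zone at a fractional rate.
struct SamplerVoice {
    pos: f64,  // fractional read position, in samples
    rate: f64, // pitch ratio: 1.0 = original pitch, 2.0 = one octave up
    gain: f32, // velocity scaling
}

impl SamplerVoice {
    fn new(semitones: f64, velocity: u8) -> Self {
        Self {
            pos: 0.0,
            rate: 2f64.powf(semitones / 12.0), // equal-tempered pitch shift
            gain: velocity as f32 / 127.0,     // simple linear velocity curve
        }
    }

    fn next_sample(&mut self, zone: &Zone) -> f32 {
        let i = self.pos as usize;
        let frac = (self.pos - i as f64) as f32;
        // The right-hand neighbor wraps to loop_start at the end of the loop.
        let j = if i + 1 >= zone.loop_end { zone.loop_start } else { i + 1 };
        let (a, b) = (zone.data[i], zone.data[j]);
        let out = a + (b - a) * frac; // linear interpolation
        // Advance, wrapping back into the loop region once we pass loop_end.
        self.pos += self.rate;
        let loop_len = (zone.loop_end - zone.loop_start) as f64;
        while self.pos >= zone.loop_end as f64 {
            self.pos -= loop_len;
        }
        out * self.gain
    }
}

fn main() {
    let zone = Zone { data: vec![0.0, 1.0, 2.0, 3.0], loop_start: 0, loop_end: 4 };
    let mut voice = SamplerVoice::new(-12.0, 127); // one octave down, full velocity
    let out: Vec<f32> = (0..8).map(|_| voice.next_sample(&zone)).collect();
    println!("{out:?}");
}
```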

Effects Chain

Chorus, stereo delay, Freeverb reverb, and a dynamics compressor, applied as a master chain.
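A master chain can be pictured as a list of stages that each process the same block in place. The following is a minimal sketch assuming mono `f32` blocks and a hypothetical `Effect` trait. `Delay` is a plain feedback delay (the basic building block of delay and chorus effects) backed by a fixed-size array so it needs no heap, and `Gain` is only a placeholder standing where the compressor would sit, not a compressor.

```rust
/// One stage of a master effects chain, processing a mono block in place.
trait Effect {
    fn process(&mut self, block: &mut [f32]);
}

/// Feedback delay of exactly N samples (N > 0), using a fixed ring buffer.
struct Delay<const N: usize> {
    buf: [f32; N],
    pos: usize,
    feedback: f32,
    mix: f32, // 0.0 = dry only, 1.0 = wet only
}

impl<const N: usize> Delay<N> {
    fn new(feedback: f32, mix: f32) -> Self {
        Self { buf: [0.0; N], pos: 0, feedback, mix }
    }
}

impl<const N: usize> Effect for Delay<N> {
    fn process(&mut self, block: &mut [f32]) {
        for s in block.iter_mut() {
            let delayed = self.buf[self.pos]; // written N samples ago
            self.buf[self.pos] = *s + delayed * self.feedback;
            self.pos = (self.pos + 1) % N;
            *s = *s * (1.0 - self.mix) + delayed * self.mix;
        }
    }
}

/// Output trim, standing in for the compressor at the end of the chain.
struct Gain(f32);

impl Effect for Gain {
    fn process(&mut self, block: &mut [f32]) {
        for s in block.iter_mut() {
            *s *= self.0;
        }
    }
}

/// Run each stage in order over the same block.
fn run_chain(chain: &mut [&mut dyn Effect], block: &mut [f32]) {
    for fx in chain.iter_mut() {
        fx.process(block);
    }
}

fn main() {
    let mut delay = Delay::<4>::new(0.5, 0.5);
    let mut trim = Gain(2.0);
    let mut block = [0.0f32; 8];
    block[0] = 1.0; // unit impulse
    let mut chain: [&mut dyn Effect; 2] = [&mut delay, &mut trim];
    run_chain(&mut chain, &mut block);
    println!("{block:?}"); // [1.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0]
}
```

Borrowing stages as `&mut dyn Effect` keeps the chain order configurable at runtime without allocation; a fixed struct with one field per effect would be an equally valid, fully static alternative.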

Voice Engine

Up to 64 simultaneous voices with ADSR envelopes, event-driven scheduling, and 128-sample block processing.
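A per-voice envelope applied once per block might look like the minimal linear ADSR below. Segment times are given in samples to keep the arithmetic obvious, and `Adsr`, `Stage`, and `process_block` are hypothetical names; many engines use exponential segments instead of the linear ramps assumed here.

```rust
#[derive(Clone, Copy, PartialEq, Debug)]
enum Stage {
    Idle,
    Attack,
    Decay,
    Sustain,
    Release,
}

/// Linear ADSR. Segment times are in samples; `sustain` is a level in [0, 1].
struct Adsr {
    attack: f32,
    decay: f32,
    sustain: f32,
    release: f32,
    stage: Stage,
    level: f32,
    release_step: f32,
}

impl Adsr {
    fn new(attack: f32, decay: f32, sustain: f32, release: f32) -> Self {
        Self { attack, decay, sustain, release, stage: Stage::Idle, level: 0.0, release_step: 0.0 }
    }

    /// Start (or retrigger) the attack from the current level.
    fn note_on(&mut self) {
        self.stage = Stage::Attack;
    }

    /// Enter release, ramping from the current level to 0 over `release` samples.
    fn note_off(&mut self) {
        if self.stage != Stage::Idle {
            self.release_step = self.level / self.release.max(1.0);
            self.stage = Stage::Release;
        }
    }

    /// Advance one sample and return the envelope level.
    fn next_level(&mut self) -> f32 {
        match self.stage {
            Stage::Idle | Stage::Sustain => {}
            Stage::Attack => {
                self.level += 1.0 / self.attack.max(1.0);
                if self.level >= 1.0 {
                    self.level = 1.0;
                    self.stage = Stage::Decay;
                }
            }
            Stage::Decay => {
                self.level -= (1.0 - self.sustain) / self.decay.max(1.0);
                if self.level <= self.sustain {
                    self.level = self.sustain;
                    self.stage = Stage::Sustain;
                }
            }
            Stage::Release => {
                self.level -= self.release_step;
                if self.level <= 0.0 {
                    self.level = 0.0;
                    self.stage = Stage::Idle;
                }
            }
        }
        self.level
    }

    /// Scale one block of voice output (e.g. 128 samples) by the envelope.
    fn process_block(&mut self, block: &mut [f32]) {
        for s in block.iter_mut() {
            *s *= self.next_level();
        }
    }
}

fn main() {
    let mut env = Adsr::new(4.0, 4.0, 0.5, 4.0);
    env.note_on();
    let mut block = [1.0f32; 128]; // stand-in for one block of oscillator output
    env.process_block(&mut block);
    println!("{:?}", &block[..8]); // [0.25, 0.5, 0.75, 1.0, 0.875, 0.75, 0.625, 0.5]
}
```

A voice whose envelope has returned to `Stage::Idle` is a natural candidate to free for the next note when all 64 voices are in use.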

Biquad Filter

Lowpass, highpass, bandpass, notch, and peaking EQ responses, implemented from the Audio EQ Cookbook formulas.
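As an illustration of the cookbook approach, here is the lowpass case run in transposed direct form II; the other responses differ only in their b/a coefficient formulas. `Biquad` and `lowpass` are hypothetical names. The sketch uses f64 and `std` trigonometry; a `no_std` firmware build would need a math library such as the `libm` crate for `sin`/`cos`.

```rust
use std::f64::consts::PI;

/// Biquad in transposed direct form II, coefficients normalized by a0.
struct Biquad {
    b0: f64,
    b1: f64,
    b2: f64,
    a1: f64,
    a2: f64,
    z1: f64,
    z2: f64,
}

impl Biquad {
    /// Lowpass coefficients from the Audio EQ Cookbook (RBJ).
    fn lowpass(sample_rate: f64, cutoff: f64, q: f64) -> Self {
        let w0 = 2.0 * PI * cutoff / sample_rate;
        let (sin_w0, cos_w0) = w0.sin_cos();
        let alpha = sin_w0 / (2.0 * q);
        let a0 = 1.0 + alpha;
        Self {
            b0: (1.0 - cos_w0) / 2.0 / a0,
            b1: (1.0 - cos_w0) / a0,
            b2: (1.0 - cos_w0) / 2.0 / a0,
            a1: -2.0 * cos_w0 / a0,
            a2: (1.0 - alpha) / a0,
            z1: 0.0,
            z2: 0.0,
        }
    }

    /// y[n] = b0 x[n] + b1 x[n-1] + b2 x[n-2] - a1 y[n-1] - a2 y[n-2]
    fn process(&mut self, x: f64) -> f64 {
        let y = self.b0 * x + self.z1;
        self.z1 = self.b1 * x - self.a1 * y + self.z2;
        self.z2 = self.b2 * x - self.a2 * y;
        y
    }
}

fn main() {
    // 1 kHz Butterworth-style lowpass (Q = 1/sqrt(2)) at an assumed 48 kHz.
    let mut lp = Biquad::lowpass(48_000.0, 1_000.0, std::f64::consts::FRAC_1_SQRT_2);
    // A constant input settles at unity gain, as expected for a lowpass.
    let settled = (0..2_000).map(|_| lp.process(1.0)).last().unwrap();
    println!("step response after 2000 samples: {settled:.6}");
}
```

Transposed direct form II needs only two state variables per filter and behaves well in floating point, which is why it is a common choice for this kind of filter.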

Preset System

JSON-based instrument presets with multi-zone key mapping, velocity layers, and composite instruments (layer/split).
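Once a JSON preset has been parsed, choosing what to play reduces to a range lookup on key and velocity. The minimal sketch below leaves JSON parsing out. `ZoneMap`, its fields, and `zones_for` are hypothetical, and the real preset schema is not reproduced here.

```rust
/// One sample zone in a parsed preset: which keys and velocities it answers to.
#[derive(Debug)]
struct ZoneMap {
    key_lo: u8,     // inclusive MIDI note range
    key_hi: u8,
    vel_lo: u8,     // inclusive velocity range (one velocity layer)
    vel_hi: u8,
    sample_id: u16, // hypothetical handle into the sample store
}

/// Every zone that should sound for a note. Overlapping ranges give layers;
/// disjoint key ranges give a split.
fn zones_for(zones: &[ZoneMap], key: u8, velocity: u8) -> impl Iterator<Item = &ZoneMap> {
    zones.iter().filter(move |z| {
        (z.key_lo..=z.key_hi).contains(&key) && (z.vel_lo..=z.vel_hi).contains(&velocity)
    })
}

fn main() {
    let zones = [
        // Split: bass sample below middle C...
        ZoneMap { key_lo: 0, key_hi: 59, vel_lo: 0, vel_hi: 127, sample_id: 1 },
        // ...and two velocity layers above it.
        ZoneMap { key_lo: 60, key_hi: 127, vel_lo: 0, vel_hi: 63, sample_id: 2 },
        ZoneMap { key_lo: 60, key_hi: 127, vel_lo: 64, vel_hi: 127, sample_id: 3 },
    ];
    for z in zones_for(&zones, 72, 100) {
        println!("{z:?}");
    }
}
```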

Architecture: Split-Brain Design

The recommended architecture separates audio processing from UI rendering:

- Audio Brain (Daisy Seed): DSP engine, voice allocation, effects chain, MIDI handling, keybed scanning. Runs the hard real-time audio callback.

- UI Brain (ESP32-S3 or RP2040): Display rendering, preset browser, patch editor, meters. Connected via SPI with a compact binary protocol.

If the UI processor crashes or reboots, audio continues uninterrupted. For the initial prototype (v0), a single Daisy Seed with a small OLED display is sufficient.
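The plan doesn't specify the SPI protocol. As one sketch of what "compact binary" could mean, here is a hypothetical fixed 5-byte frame with an XOR checksum, so a garbled transfer from a misbehaving UI processor is dropped instead of reaching the audio engine. The message kinds, byte layout, and names are all assumptions.

```rust
/// Hypothetical UI <-> audio messages.
#[derive(Debug, PartialEq, Clone, Copy)]
enum Msg {
    ParamChange { param: u8, value: u16 },
    MeterLevel { channel: u8, level: u16 },
}

const KIND_PARAM: u8 = 0x01;
const KIND_METER: u8 = 0x02;

/// XOR of all bytes: cheap, catches single-byte corruption.
fn checksum(bytes: &[u8]) -> u8 {
    bytes.iter().fold(0u8, |acc, b| acc ^ b)
}

/// Frame layout: [kind, id, value_lo, value_hi, checksum].
fn encode(msg: Msg) -> [u8; 5] {
    let (kind, id, value) = match msg {
        Msg::ParamChange { param, value } => (KIND_PARAM, param, value),
        Msg::MeterLevel { channel, level } => (KIND_METER, channel, level),
    };
    let [lo, hi] = value.to_le_bytes();
    let mut frame = [kind, id, lo, hi, 0];
    frame[4] = checksum(&frame[..4]);
    frame
}

/// Returns None for a bad checksum or an unknown message kind.
fn decode(frame: &[u8; 5]) -> Option<Msg> {
    if checksum(&frame[..4]) != frame[4] {
        return None;
    }
    let value = u16::from_le_bytes([frame[2], frame[3]]);
    match frame[0] {
        KIND_PARAM => Some(Msg::ParamChange { param: frame[1], value }),
        KIND_METER => Some(Msg::MeterLevel { channel: frame[1], level: value }),
        _ => None,
    }
}

fn main() {
    let frame = encode(Msg::ParamChange { param: 7, value: 0x1234 });
    println!("{frame:?}"); // [1, 7, 52, 18, 32]
    println!("{:?}", decode(&frame));
}
```

Fixed-size frames keep parsing on the audio side allocation-free and bounded in time, which matters when it runs next to the real-time callback.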

All SongWalker Targets

The firmware keyboard joins the existing family of deployment targets — all sharing the same Rust core:

| Target | Platform | Audio Path | Status |
|---|---|---|---|
| Web Editor | Browser (WASM) | Web Audio API | Live |
| VSTi Plugin | Desktop (nih-plug) | DAW audio callback | Live |
| CLI Renderer | Desktop (native) | Offline WAV file | Live |
| Hardware Keyboard | Daisy (Cortex-M7) | DMA → codec | Planned |

Prototype Roadmap

Prove Rust DSP on Daisy (4–6 weeks)

Port oscillators, envelopes, voice engine, sampler, and effects to a Daisy Seed dev board. Validate polyphony, latency (< 3 ms), and stability over 48-hour soak tests. Use an off-the-shelf MIDI controller for note input.
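For a sense of scale, each buffered block adds N / f_s seconds of delay. Assuming a 48 kHz sample rate (the plan doesn't state one), a single 128-sample block already uses about 2.67 ms of the 3 ms target, so the embedded build may need smaller blocks. This is back-of-the-envelope arithmetic, not a measurement, and it ignores codec, keybed-scan, and MIDI latency. `buffer_latency_ms` is a hypothetical helper.

```rust
/// Delay contributed by buffering `frames` samples at `sample_rate` Hz, in ms.
fn buffer_latency_ms(frames: u32, sample_rate: u32) -> f64 {
    frames as f64 * 1000.0 / sample_rate as f64
}

fn main() {
    // Assumed 48 kHz codec rate.
    for frames in [32, 48, 64, 128] {
        println!("{frames:>4} frames -> {:.2} ms", buffer_latency_ms(frames, 48_000));
    }
}
```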

Keyboard Form Factor (8–12 weeks)

Integrate an OEM keybed (25 or 37 keys), add a split-brain UI processor with a color TFT, and build a custom carrier PCB and a 3D-printed enclosure. Implement .sw file playback via the streaming SongRunner.

Founder Prototype Run (12–16 weeks)

Small batch (20–50 units) for early contributors. Refined enclosure, repeatable assembly, firmware update via USB drag-and-drop, and documentation. Targeting Crowd Supply or Kickstarter.

We're Open to Suggestions

This plan is a living document and we'd love your input. What key count (25/37/49/61)? Knobs-first or touchscreen? MIDI DIN, USB, or both? What features matter most for a performance instrument?

Open an issue or start a discussion on GitHub; we're actively shaping this based on community feedback.

The full technical plan is available in the docs.

